If you build APIs today, two stacks repeatedly appear in production systems:

- Node.js
- FastAPI

Both are widely known for handling high-concurrency workloads, and both rely heavily on event-driven architectures.

At first glance, the two appear almost identical:

- Both rely on non-blocking I/O
- Both encourage asynchronous programming
- Both can handle thousands of concurrent connections

Because of these similarities, many developers assume they behave in roughly the same way internally.

But the reality is more nuanced!

While the high-level philosophy is similar, the execution model, scheduling behavior, and CPU utilization strategy differ significantly.

Understanding these differences becomes extremely important when designing:

- large-scale APIs
- real-time systems
- microservices
- AI inference backends
- streaming infrastructures

## Architectures

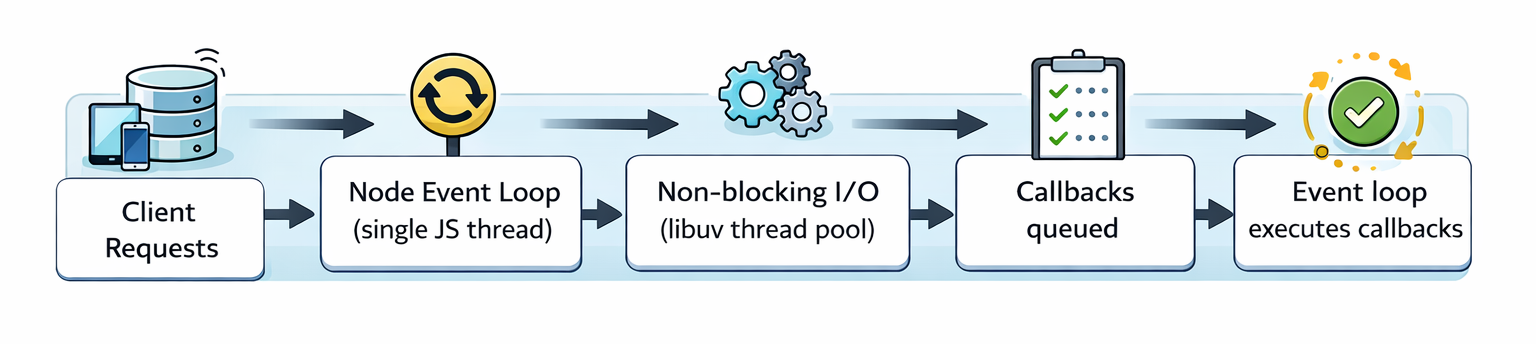

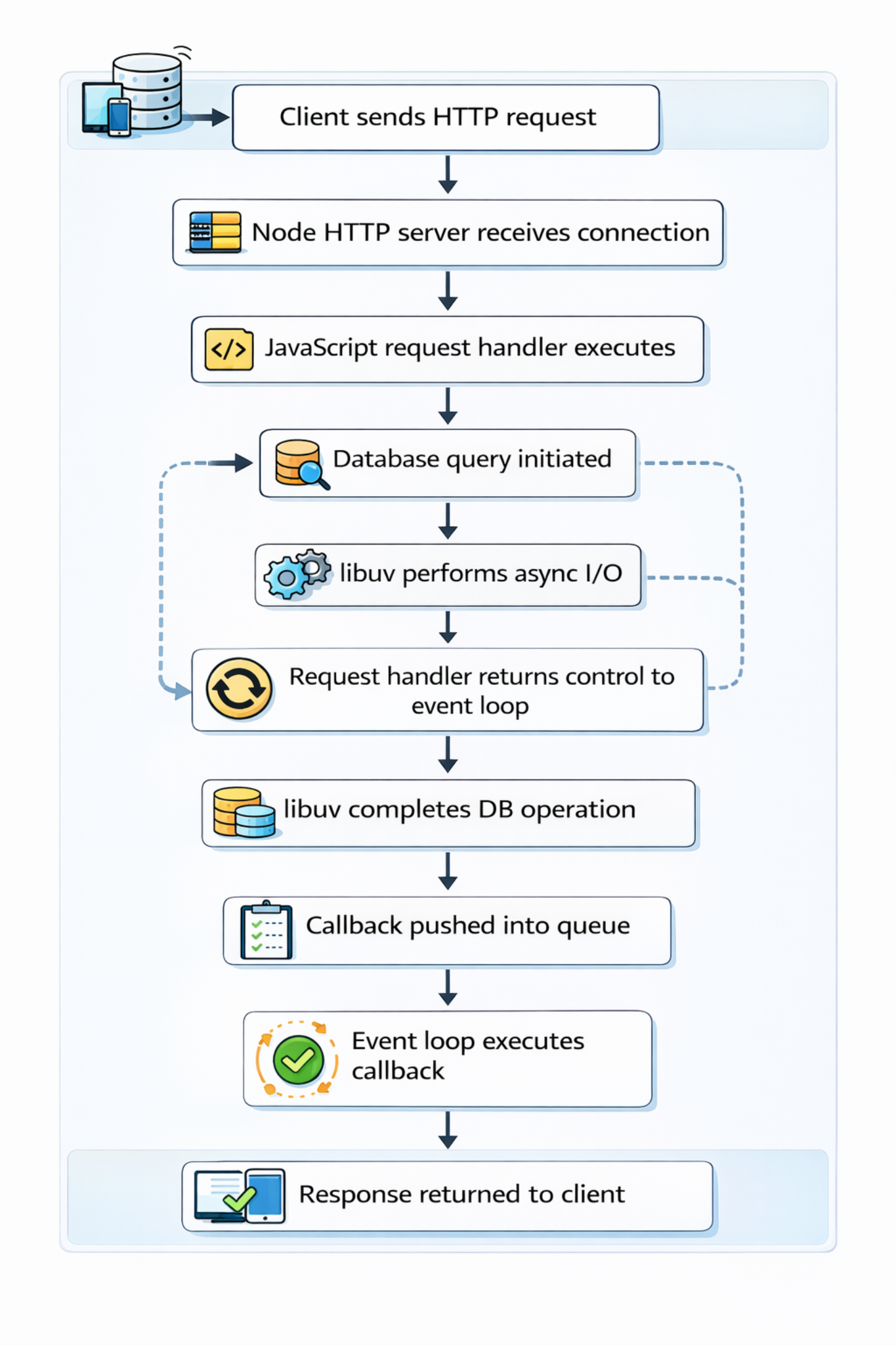

Node.js executes JavaScript on a single thread.

This design decision was intentional. JavaScript historically ran inside browsers where concurrency was handled through event-driven callbacks rather than threads.

Node adopted the same model.

Important characteristics:

- All JavaScript code runs on one thread
- I/O operations are delegated to libuv
- When I/O completes, callbacks are pushed back into the event loop

This design works extremely well for I/O-heavy workloads, which most web servers are.

Instead of spawning thousands of threads, Node maintains a single event loop and multiplexes many connections.

But there is a trade-off. If CPU-heavy work blocks the JavaScript thread, the entire server stalls.

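A simple example: a loop like this never returns control to the event loop, so no other request is served while it runs.

    while (true) {}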

The Node.js event loop is implemented through libuv, a high-performance C library responsible for:

- asynchronous I/O
- networking
- file system operations
- timers
- thread pool scheduling

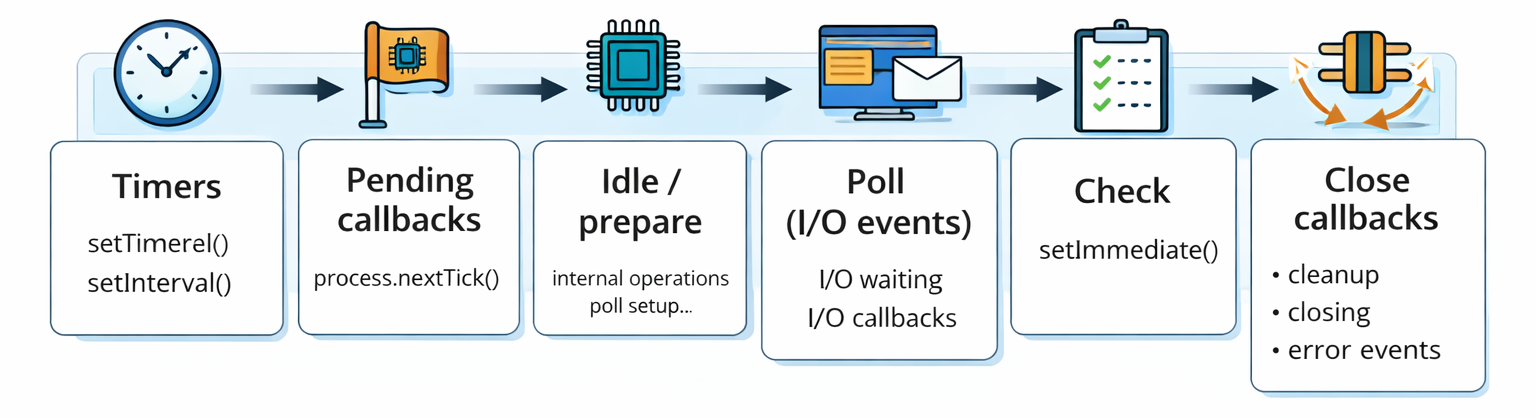

The event loop itself runs through several distinct phases.

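- Timers phase: runs callbacks scheduled via setTimeout() and setInterval().
- Pending callbacks: processes certain system-level callbacks, such as TCP errors, that were deferred to the next loop iteration.
- Poll phase: this is the heart of the event loop. In this phase, Node:
  - waits for I/O events
  - retrieves completed operations from the OS
  - executes the associated callbacks
- Check phase: executes callbacks scheduled via setImmediate().
- Close callbacks: handles cleanup events, such as a socket's close event.

An additional idle/prepare phase sits between pending callbacks and poll, but it is used only internally.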

Let's talk about FastAPI.

FastAPI itself is just a framework.

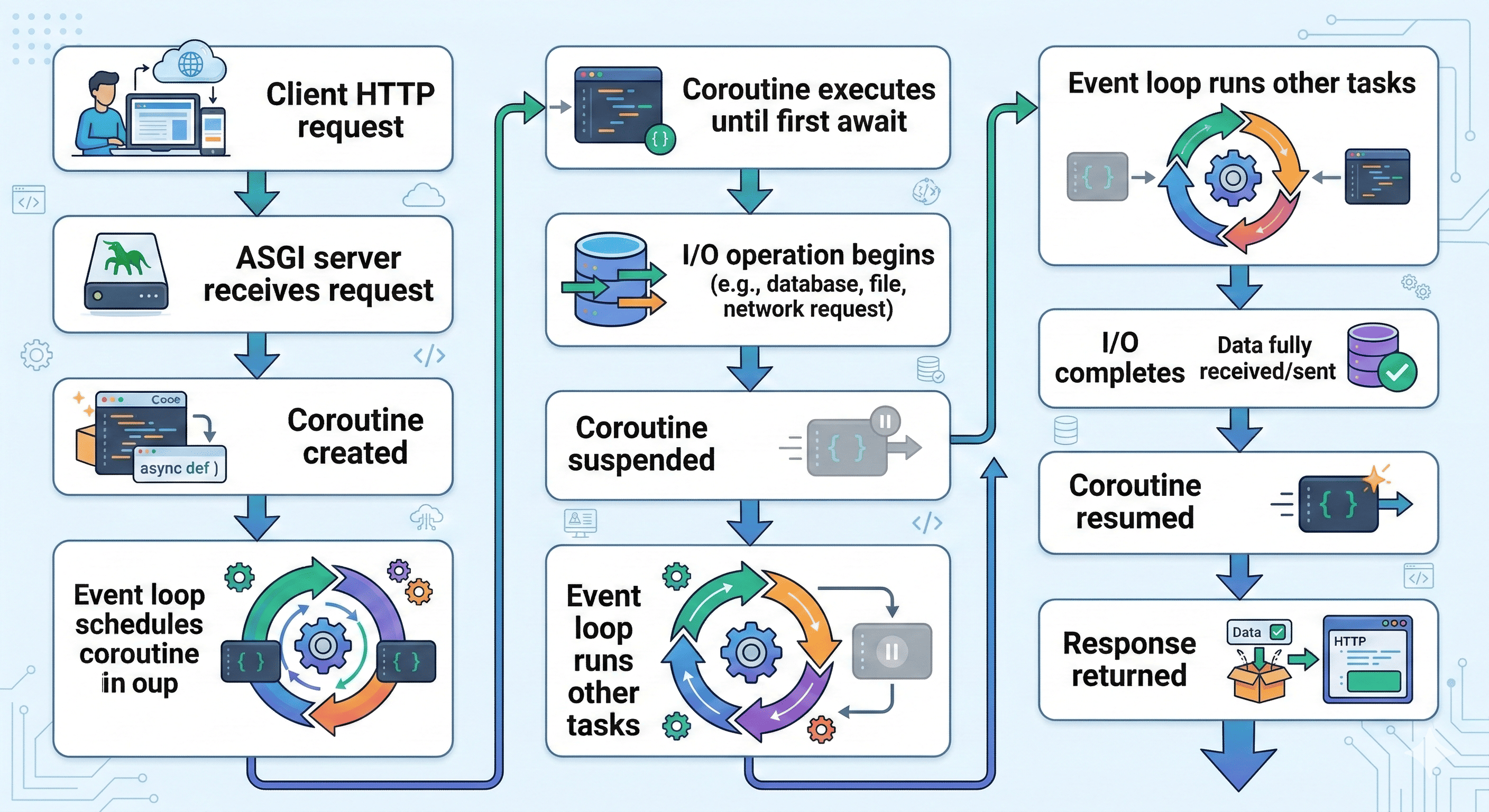

The real runtime comes from ASGI servers, typically:

- Uvicorn
- Hypercorn
- Gunicorn + Uvicorn workers

Each worker is:

- a separate OS process
- running its own Python interpreter
- with its own event loop

Concurrency therefore comes from two layers:

1. Async coroutines inside the worker
2. Multiple worker processes across CPU cores

This means a FastAPI deployment can use multiple CPU cores simply by running several workers (Node needs the cluster module, or several processes behind a process manager, to achieve the same).
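The first of those layers, coroutines sharing one thread inside a worker, can be sketched with plain asyncio. This is a minimal illustration rather than FastAPI code: handle_request is a hypothetical stand-in for an endpoint, and asyncio.sleep stands in for a database or network wait.

```python
import asyncio
import threading
import time


async def handle_request(request_id: int) -> int:
    # asyncio.sleep stands in for a non-blocking database or network wait.
    await asyncio.sleep(0.05)
    # Report which OS thread ran this coroutine.
    return threading.get_ident()


async def serve(n_requests: int) -> tuple[float, set[int]]:
    start = time.perf_counter()
    # Start all requests at once; each one yields to the loop while waiting.
    thread_ids = await asyncio.gather(
        *(handle_request(i) for i in range(n_requests))
    )
    elapsed = time.perf_counter() - start
    return elapsed, set(thread_ids)


elapsed, threads_used = asyncio.run(serve(1000))
print(f"1000 requests in {elapsed:.2f}s on {len(threads_used)} thread(s)")
```

Handled one after another, 1000 waits of 50 ms would take about 50 seconds. Because every wait overlaps on a single thread, the whole batch finishes in a small fraction of that.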

FastAPI relies on Python's asyncio framework, which implements asynchronous execution using coroutines rather than callbacks. A typical endpoint might look like this:

    @app.get("/users")
    async def get_users():
        # db stands in for an async database client
        result = await db.fetch_all()
        return result

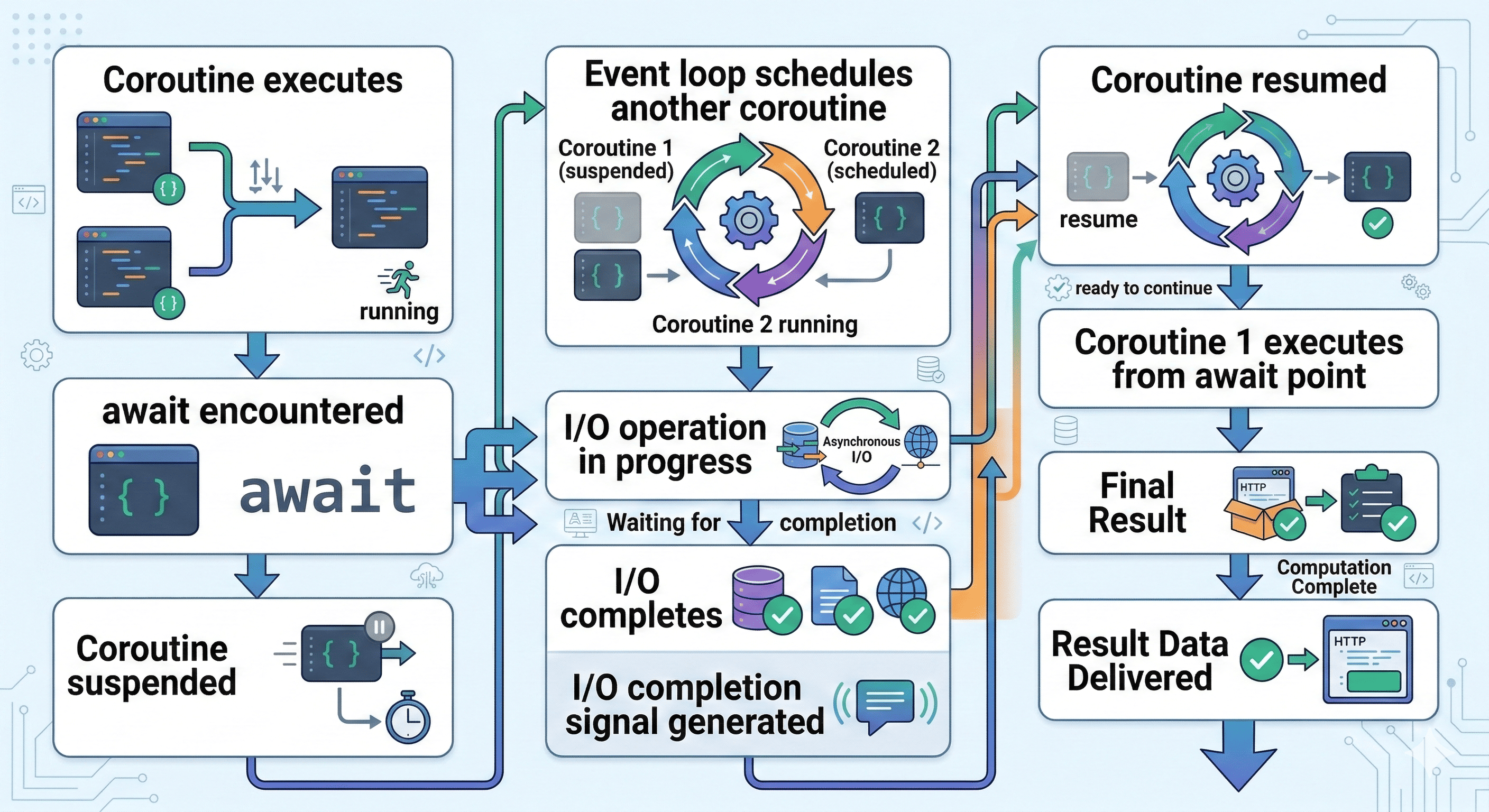

Python creates a coroutine object, which behaves like a pausable computation. The event loop schedules and resumes these coroutines as needed. Instead of registering callbacks, Python pauses the coroutine and later resumes it.
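A small sketch makes the "pausable computation" idea concrete. The names here (get_user, the returned dictionary) are hypothetical and chosen only for illustration; asyncio.sleep plays the role of the awaited database call.

```python
import asyncio
import inspect


async def get_user(user_id: int) -> dict:
    # Suspension point: control returns to the event loop here.
    await asyncio.sleep(0.01)
    return {"id": user_id}


# Calling the function does not run its body; it only builds a coroutine object.
coro = get_user(1)
is_coroutine = inspect.iscoroutine(coro)

# The event loop drives the coroutine, pausing and resuming it at each await.
user = asyncio.run(coro)
print(is_coroutine, user)
```

In a FastAPI application you never call asyncio.run yourself; the ASGI server owns the loop and schedules the coroutine for each request.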

This produces a more linear and readable programming model.

## Callback vs Coroutine Model

This is where the programming model diverges significantly.

Early Node applications relied heavily on callbacks.

    db.query(sql, (err, result) => {
      // Node-style callbacks receive the error first
      if (err) return console.error(err)
      console.log(result)
    })

This led to the infamous callback hell problem.

Later improvements introduced:

- Promises
- async / await

With async/await, the same query becomes:

    const result = await db.query(sql)

However, internally Node still schedules operations via callback queues. The async/await syntax is largely syntactic sugar over Promises and callbacks.

On the other side, Python's async system is built around coroutines and cooperative scheduling.

    result = await db.query()

Because coroutines can pause and resume naturally, complex asynchronous flows often become much easier to reason about.
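To compare the two styles within one language, here is a sketch that runs the same two-step flow twice in Python: once by chaining done-callbacks on tasks, and once with await. The fetch helper is hypothetical and simply echoes its argument after a short wait.

```python
import asyncio


async def fetch(value: int) -> int:
    await asyncio.sleep(0.01)  # stand-in for an I/O wait
    return value


async def callback_style() -> int:
    # Each step registers a callback that starts the next step.
    loop = asyncio.get_running_loop()
    done = loop.create_future()

    def on_second(task: asyncio.Task) -> None:
        done.set_result(task.result())

    def on_first(task: asyncio.Task) -> None:
        second = loop.create_task(fetch(task.result() + 1))
        second.add_done_callback(on_second)

    first = loop.create_task(fetch(1))
    first.add_done_callback(on_first)
    return await done


async def coroutine_style() -> int:
    # The same flow, written top to bottom.
    first = await fetch(1)
    return await fetch(first + 1)


callback_result = asyncio.run(callback_style())
coroutine_result = asyncio.run(coroutine_style())
print(callback_result, coroutine_result)
```

Both versions produce the same value. The difference is where the control flow lives: spread across nested functions in the first, in a single function body in the second.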

## Threads

Another important architectural distinction is thread utilization.

Node consists of: 1 main JavaScript thread + libuv worker thread pool

The worker pool handles operations such as:

- file system access
- DNS lookups
- compression
- cryptography

The default thread pool size is 4 threads.

This can be increased with the UV_THREADPOOL_SIZE environment variable.

However, JavaScript execution itself remains single-threaded.

Even with a larger thread pool, heavy CPU tasks executed in JavaScript can still block the event loop.

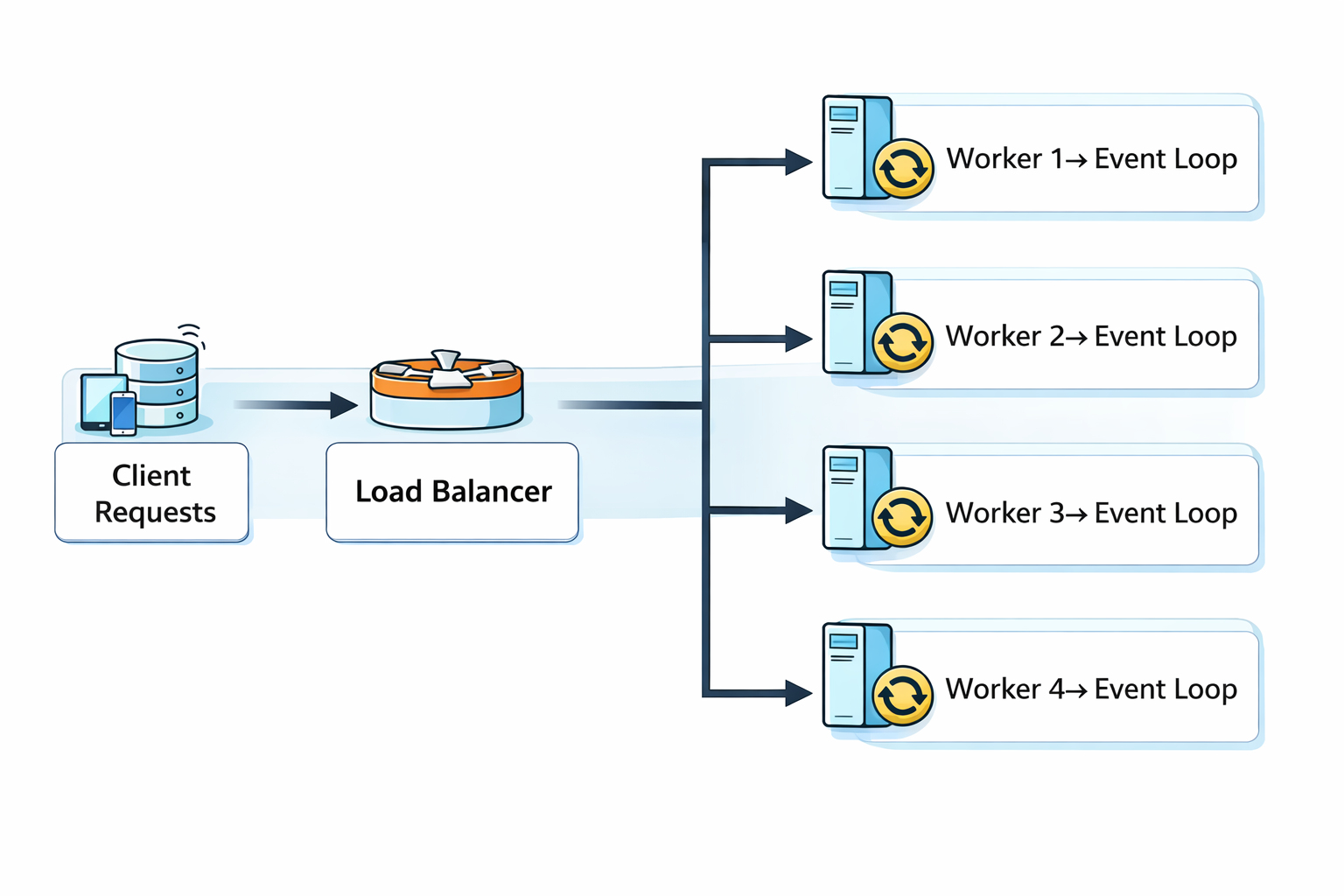

FastAPI deployments typically rely on multiple worker processes, for example Gunicorn managing 4 Uvicorn workers.

Each worker contains:

- 1 OS process
- 1 Python interpreter
- 1 event loop

The result, conceptually (the OS scheduler decides which core actually runs each process):

- Worker 1 → CPU core 1
- Worker 2 → CPU core 2
- Worker 3 → CPU core 3
- Worker 4 → CPU core 4

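This enables true parallelism across CPU cores, something Python normally struggles with because of the Global Interpreter Lock (GIL), which lets only one thread execute Python bytecode at a time within a process.

But since each worker is a separate process with its own interpreter and its own GIL, the lock no longer limits parallelism across workers.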
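The "one process, one interpreter, one event loop" layout can be sketched with the standard library alone. This is only an illustration of the idea: it uses subprocess to start hypothetical workers, which is not how Gunicorn or Uvicorn actually launch and supervise theirs.

```python
import os
import subprocess
import sys

# Code run by each worker: a fresh interpreter with its own event loop.
WORKER_CODE = """
import asyncio, os

async def main():
    await asyncio.sleep(0.01)  # this worker's own event loop is running
    print(os.getpid())

asyncio.run(main())
"""


def spawn_workers(count: int) -> list[int]:
    # Start every worker first so they run side by side, then collect PIDs.
    procs = [
        subprocess.Popen(
            [sys.executable, "-c", WORKER_CODE],
            stdout=subprocess.PIPE,
            text=True,
        )
        for _ in range(count)
    ]
    return [int(proc.communicate()[0]) for proc in procs]


parent_pid = os.getpid()
worker_pids = spawn_workers(4)
print(f"parent {parent_pid}, workers {worker_pids}")
```

Each worker reports a different process ID from the parent and from its siblings, so a CPU-bound task holding the GIL in one of them cannot stall the others.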

One common misunderstanding is that FastAPI automatically runs everything asynchronously. That is not true.

Consider this endpoint:

    def endpoint(): ...

This is not asynchronous. FastAPI will run it inside a thread pool executor.

In this case: Blocking function -> Executed in thread pool -> Event loop remains free

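To run directly on the event loop, endpoints must be written as:

    async def endpoint(): ...

Inside an async def endpoint, any blocking call stalls the event loop for every request handled by that worker, so blocking work should be awaited through an async library or offloaded to a thread.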
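The difference between blocking on the loop and offloading to a thread can be measured with plain asyncio. In this sketch, ticker is a hypothetical stand-in for other requests being served, and time.sleep stands in for any blocking call; asyncio.to_thread is used here for the offloading, which is an assumption of the example and not a description of FastAPI's internals.

```python
import asyncio
import time


async def ticker(state: dict) -> None:
    # Stand-in for "other requests": bumps a counter every 10 ms.
    while state["running"]:
        state["ticks"] += 1
        await asyncio.sleep(0.01)


async def measure() -> tuple[int, int]:
    state = {"ticks": 0, "running": True}
    task = asyncio.create_task(ticker(state))
    await asyncio.sleep(0)  # let the ticker start

    before = state["ticks"]
    time.sleep(0.2)  # blocking call on the event loop thread
    ticks_while_blocked = state["ticks"] - before

    before = state["ticks"]
    await asyncio.to_thread(time.sleep, 0.2)  # same call in a worker thread
    ticks_while_offloaded = state["ticks"] - before

    state["running"] = False
    await task
    return ticks_while_blocked, ticks_while_offloaded


blocked, offloaded = asyncio.run(measure())
print(f"ticks while blocked: {blocked}, while offloaded: {offloaded}")
```

While the blocking call runs on the loop thread the ticker cannot advance at all. Once the same call is moved to a thread, the ticker keeps running for the full duration.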

Understanding the full stack layers also helps.

Node.js: V8 Engine -> Node.js Runtime -> libuv -> Operating System

V8 executes JavaScript. libuv handles async I/O.

FastAPI: Python Interpreter -> FastAPI Framework -> ASGI -> Uvicorn Server -> asyncio / uvloop -> Operating System

An interesting detail: many FastAPI deployments use uvloop, an event loop implementation written in C.

And the fun part is that uvloop is built on top of libuv, the same library used by Node.js.

So in many modern deployments:

FastAPI + uvloop = Python + libuv

Different language. Same underlying asynchronous engine.
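Because uvloop implements the same asyncio loop interface, swapping it in does not change application code. The sketch below assumes uvloop may or may not be installed and falls back to the standard library loop when it is missing; ping is a hypothetical coroutine used only to exercise the loop.

```python
import asyncio

try:
    import uvloop  # third-party package, backed by libuv

    new_loop = uvloop.new_event_loop
except ImportError:
    new_loop = asyncio.new_event_loop  # standard library loop


async def ping() -> str:
    await asyncio.sleep(0.01)
    return "pong"


loop = new_loop()
try:
    # The coroutine is identical whichever loop implementation runs it.
    reply = loop.run_until_complete(ping())
finally:
    loop.close()

print(reply, "via", type(loop).__module__)
```

Servers such as Uvicorn make this choice for you through their own configuration, so application code rarely touches the loop directly.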

So both Node.js and FastAPI follow the same fundamental philosophy: event-driven, non-blocking I/O.

But they scale concurrency in different ways.

Node.js:

- single JavaScript execution thread
- libuv thread pool for file system, DNS, and other blocking operations
- parallelism requires cluster mode or worker threads

FastAPI:

- asyncio coroutines
- multiple worker processes
- natural multi-core scalability

Now we can summarize the concurrency strategy of each runtime.

Node.js Concurrency model: Single-threaded event loop + Non-blocking I/O

This works exceptionally well for high I/O throughput workloads:

- APIs
- streaming services and pipelines
- real-time applications
- WebSocket servers and infrastructure

Typical use cases:

- chat servers
- API gateways
- live dashboards
- collaborative applications

Node also benefits from a massive ecosystem and mature tooling.

However, CPU-heavy tasks can block the event loop, for example:

- large JSON parsing
- image processing
- encryption
- machine learning inference

These tasks often require:

- worker threads
- microservices
- background job systems

On the other hand, FastAPI concurrency combines async coroutines with multiple worker processes.

This creates a system where:

- Worker 1 → blocked by CPU task
- Worker 2 → still serving requests
- Worker 3 → still serving requests
- Worker 4 → still serving requests

The system remains responsive because workload is distributed across processes.

Major advantages include:

- seamless integration with machine learning frameworks
- strong type hints
- automatic request validation via Pydantic
- excellent developer ergonomics

Performance is also surprisingly strong for a Python framework, particularly on I/O-bound workloads.

Both Node.js and FastAPI are powerful tools. The right choice often depends on the problem you're solving.

Node.js is the right choice when building real-time systems and working within the JavaScript ecosystem.

FastAPI will be a beast when building services around Python, data science, machine learning, or AI workloads.

What matters most is understanding how their concurrency models actually behave under load, because architecture decisions made early tend to shape how systems scale later.