
Netflix, founded in 1997 as a DVD rental service, had become the world's leading streaming platform by 2026, serving over 280 million subscribers in more than 190 countries. It delivers billions of hours of video each month and accounts for a significant share of global internet traffic, up to 15% in some regions. This case study examines how Netflix operates at scale, focusing on how it uses distributed networks to minimize latency and keep streaming smooth. We'll walk through the end-to-end architecture, from content ingestion to delivery, and show why distributed systems are essential and how they achieve near-zero perceived latency for users.

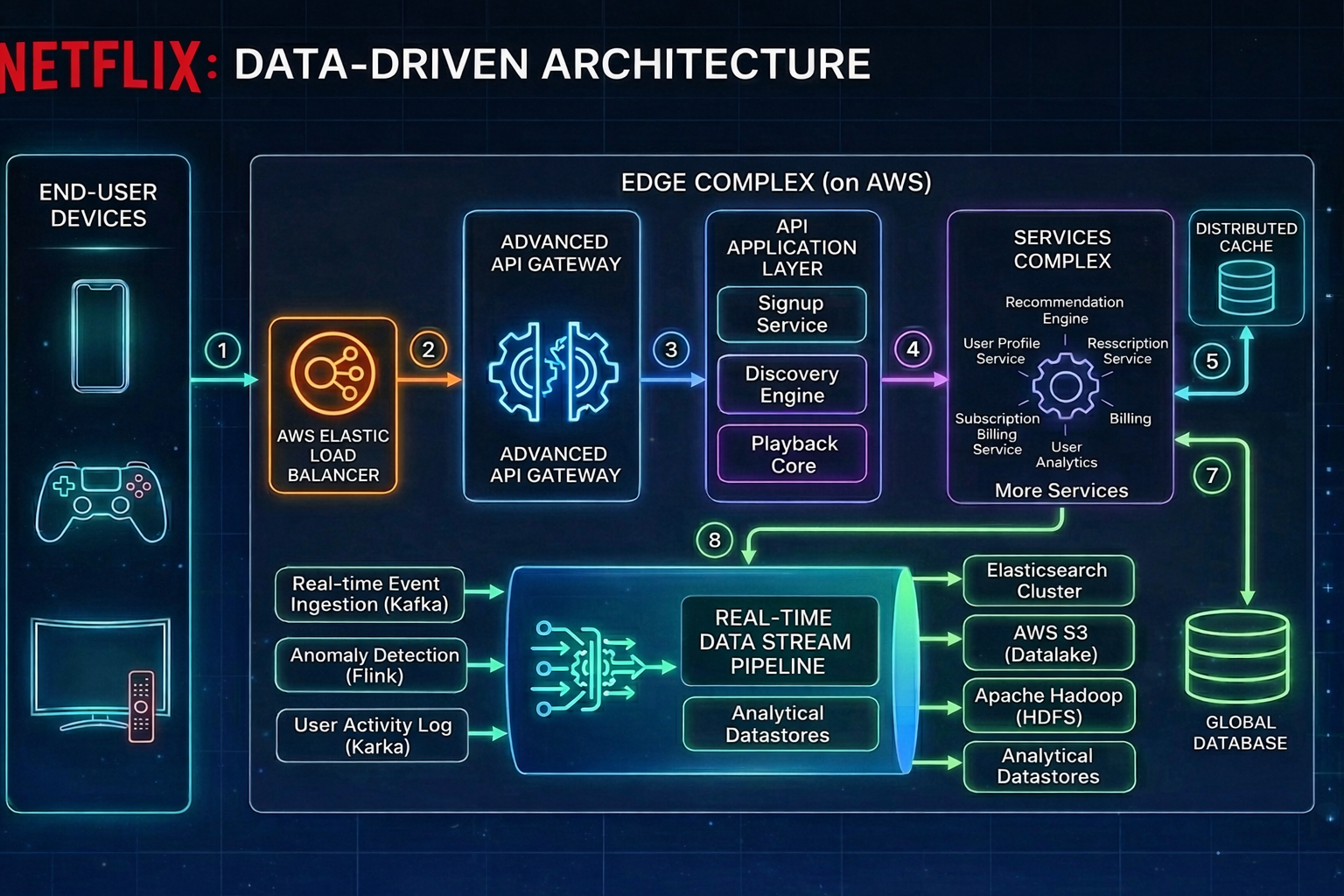

Netflix's success hinges on a cloud-native, microservices-based architecture running primarily on Amazon Web Services (AWS), complemented by its proprietary Content Delivery Network (CDN) called Open Connect. This setup allows Netflix to handle peak loads exceeding 100 terabits per second (Tbps) while maintaining high availability and personalization. Key challenges addressed include handling massive data volumes, ensuring fault tolerance, and optimizing for diverse devices and network conditions.

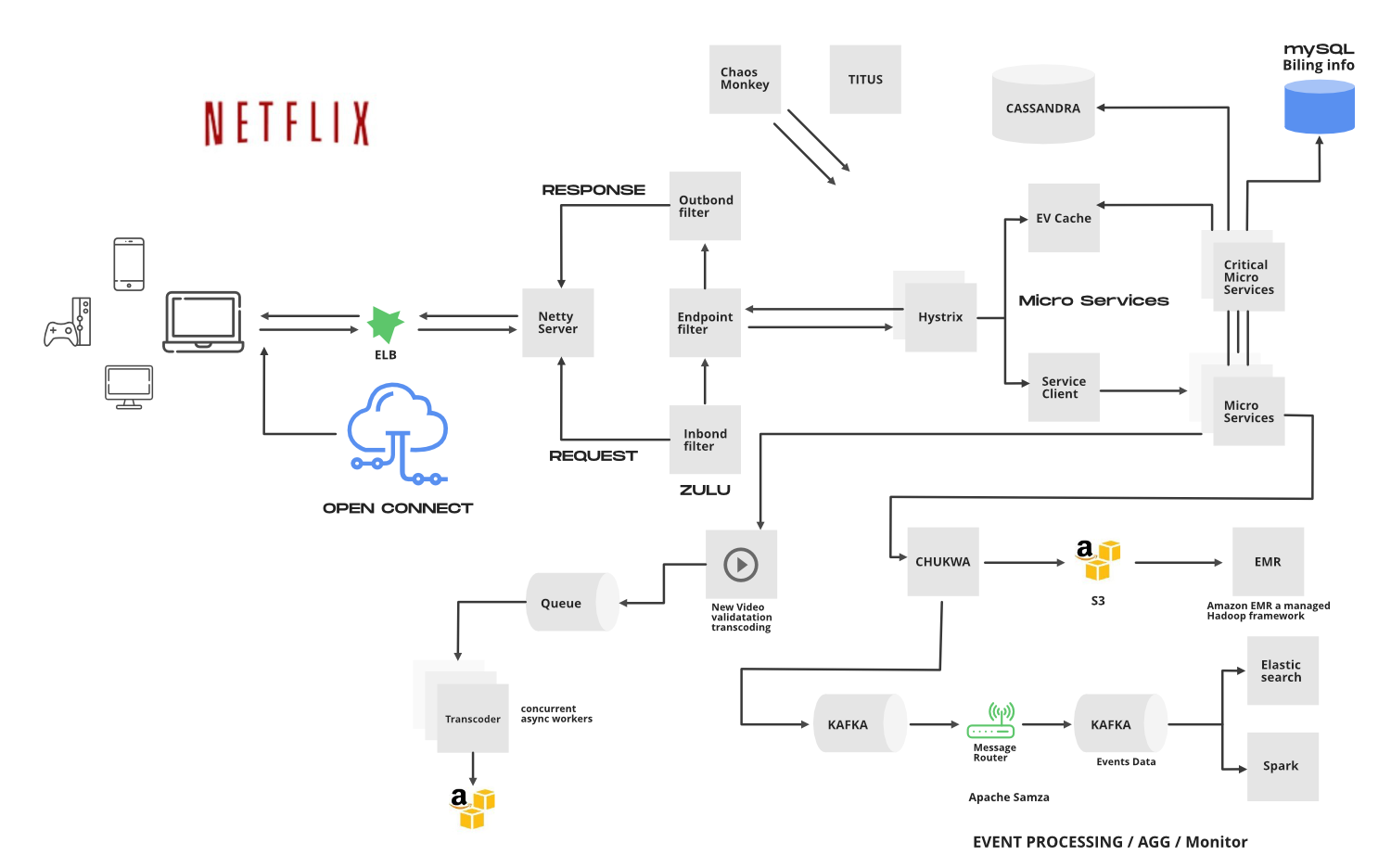

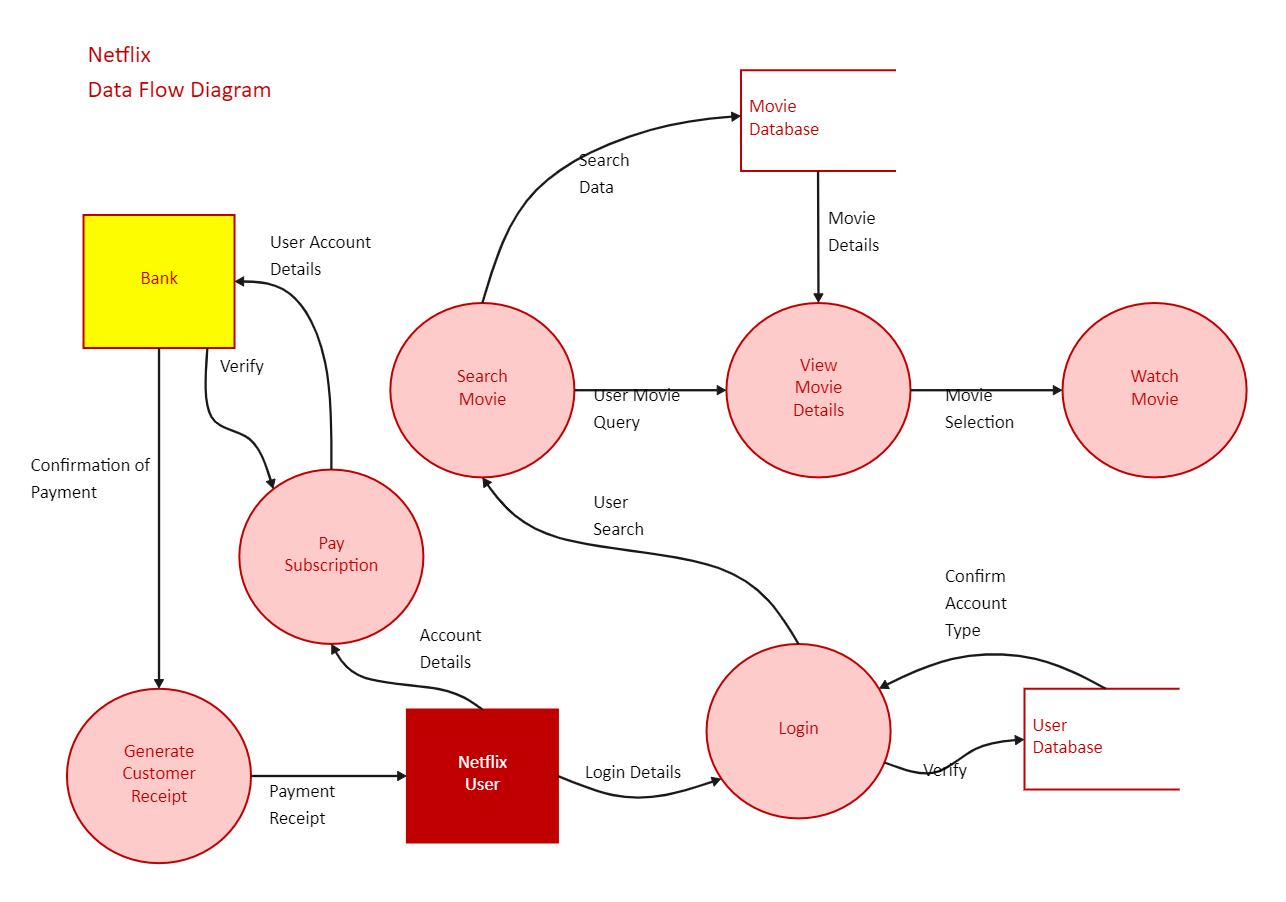

Netflix's architecture is divided into client-side applications (apps on TVs, phones, etc.), backend services on AWS, and the Open Connect CDN for content delivery. The backend uses microservices for tasks like user authentication, recommendations, and metadata management, while video streaming is offloaded to the CDN to avoid bottlenecks.

A high-level view shows user requests routing through AWS Elastic Load Balancers (ELB) to API gateways, which orchestrate calls to services like signup, playback, and discovery APIs. Data is stored in databases such as Cassandra for scalability, MySQL for billing, and caches like EVCache for fast access. Stream processing pipelines, often using Kafka and Apache Spark, handle real-time events like viewing sessions.
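EVCache is used as a look-aside cache in front of persistent stores such as Cassandra: reads try the cache first and fall back to the database only on a miss. The sketch below shows that read path under clear assumptions. An in-memory dict stands in for EVCache (this is not the EVCache client API), `FakeProfileStore` is a hypothetical stand-in for a Cassandra table, and the TTL value is arbitrary.

```python
import time
from typing import Any, Callable, Optional


class LookAsideCache:
    """Cache-aside read path: try the cache, fall back to the store, then populate the cache.

    The dict is a stand-in for EVCache (memcached-based); it is not the EVCache client.
    """

    def __init__(self, ttl_seconds: float = 60.0, clock: Callable[[], float] = time.monotonic):
        self._entries: dict[str, tuple[float, Any]] = {}  # key -> (expires_at, value)
        self._ttl = ttl_seconds
        self._clock = clock
        self.hits = 0
        self.misses = 0

    def get(self, key: str, load_from_db: Callable[[str], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: read from the backing store and repopulate the cache.
        self.misses += 1
        value = load_from_db(key)
        self._entries[key] = (now + self._ttl, value)
        return value


class FakeProfileStore:
    """Hypothetical stand-in for a Cassandra-backed profile table."""

    def __init__(self) -> None:
        self.rows = {"user:42": {"name": "Ada", "plan": "premium"}}
        self.reads = 0

    def load(self, key: str) -> Optional[dict]:
        self.reads += 1
        return self.rows.get(key)


db = FakeProfileStore()
cache = LookAsideCache(ttl_seconds=30)
first = cache.get("user:42", db.load)   # miss: goes to the store
second = cache.get("user:42", db.load)  # hit: served from the cache
print(first == second, cache.hits, cache.misses, db.reads)  # True 1 1 1
```

Passing the clock in as a parameter keeps the expiry logic testable without sleeping.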

Content Ingestion and Processing

Content ingestion begins when studios or production teams deliver master files. Netflix transcodes each master into many renditions (resolutions from 240p to 4K; codecs such as AV1 and HEVC) to support adaptive bitrate streaming. The process uses AWS services such as S3 for storage and EMR (Elastic MapReduce) for distributed processing, along with tools like Chukwa for log collection.

Transcoding Pipeline: Videos are queued and processed concurrently by asynchronous workers. Validation ensures quality, then files are packaged with DRM (Digital Rights Management) encryption.

Metadata Generation: Details like subtitles, audio tracks, and thumbnails are created and stored in databases.

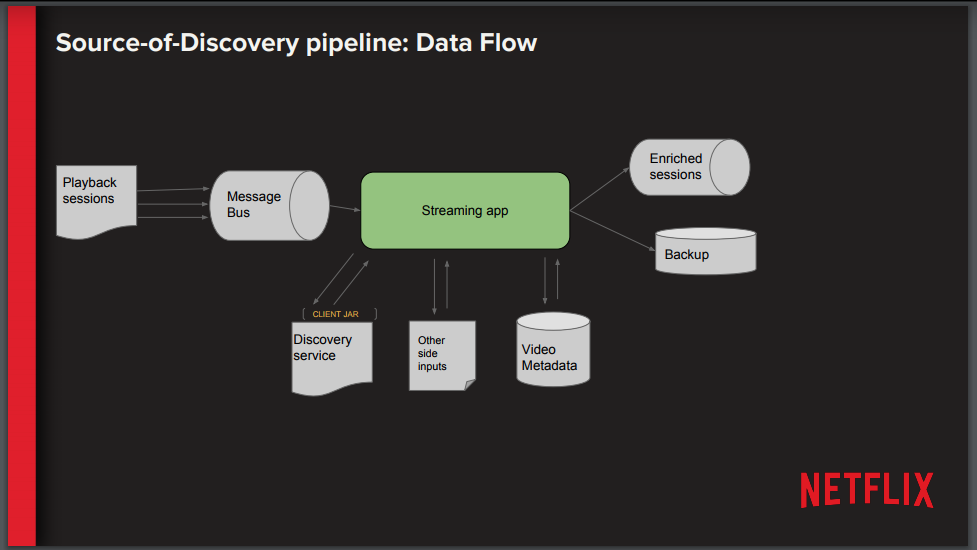

Event Processing: Kafka routes events to stream processors (e.g., Apache Samza) and monitoring systems for analytics, feeding into recommendation engines.
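The queue-and-workers pattern in the transcoding pipeline can be sketched with Python's `asyncio`. This is an illustration only: the `LADDER` of renditions, the `transcode` coroutine, and the `validate` check are hypothetical placeholders rather than Netflix's encoder, and real encodes would run on a compute cluster, not in one process.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    title_id: str
    resolution: str  # e.g. "1080p"
    codec: str       # e.g. "AV1"


# Hypothetical encoding ladder: a small subset of resolutions and codecs.
LADDER = [(res, codec) for res in ("240p", "720p", "1080p", "2160p") for codec in ("HEVC", "AV1")]


async def transcode(job: Job) -> str:
    # Placeholder for a real encode; sleeps briefly to simulate work.
    await asyncio.sleep(0.01)
    return f"{job.title_id}_{job.resolution}_{job.codec}.mp4"


def validate(output: str) -> bool:
    # Placeholder quality check; a real pipeline would inspect the encoded stream.
    return output.endswith(".mp4")


async def worker(queue: asyncio.Queue, results: list[str]) -> None:
    while True:
        job = await queue.get()
        try:
            output = await transcode(job)
            if validate(output):
                results.append(output)  # next stage would be DRM packaging (not shown)
        finally:
            queue.task_done()


async def run_pipeline(title_id: str, num_workers: int = 4) -> list[str]:
    queue: asyncio.Queue = asyncio.Queue()
    for res, codec in LADDER:
        queue.put_nowait(Job(title_id, res, codec))
    results: list[str] = []
    workers = [asyncio.create_task(worker(queue, results)) for _ in range(num_workers)]
    await queue.join()  # wait until every queued job has been processed
    for w in workers:
        w.cancel()      # workers loop forever, so stop them explicitly
    await asyncio.gather(*workers, return_exceptions=True)
    return sorted(results)


outputs = asyncio.run(run_pipeline("title123"))
print(len(outputs))  # 8
```

Because each rendition is an independent job, adding workers increases throughput without changing the pipeline's structure.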

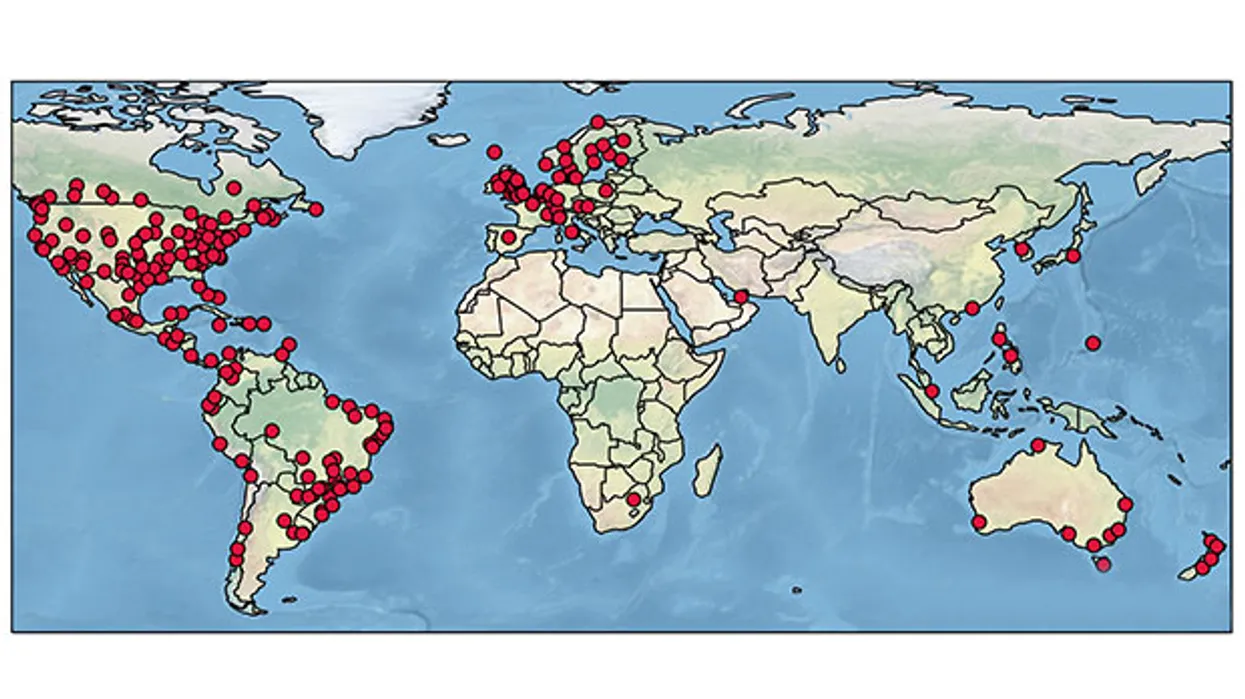

Netflix initially relied on third-party CDNs such as Akamai, but as traffic grew to petabyte scale, cost and control became problems. In 2011, Netflix began building Open Connect, a custom CDN, to address both. Open Connect is a distributed network of over 18,000 servers (Open Connect Appliances, or OCAs) in more than 6,000 locations across 142 countries, embedded directly in Internet Service Providers (ISPs) or placed at Internet Exchange Points (IXPs).

Why Open Connect Uses a Distributed Network:

Scalability: No centralized server farm could serve Netflix's 125+ million hours of daily viewing. Distributed OCAs cache content locally and achieve ~98% cache hit rates, so only about 2% of traffic reaches origin servers (roughly 1.46 Tbps out of 73 Tbps served at the edge).

Cost Efficiency: By partnering with ISPs, Netflix avoids transit fees. Embedded OCAs save ISPs ~$1.2 billion annually by offloading traffic from upstream networks.

Reliability: Proactive caching (pre-positioning popular content overnight during low-usage "fill windows") minimizes demand-driven fills. Redundancy across sites ensures failover.

Collaboration with ISPs: Netflix provides OCAs free to qualifying ISPs, who handle power and space. This cooperative model localizes traffic, freeing ISP infrastructure for other uses like live streaming.

Global Reach: OCAs are placed strategically—large ones at regional hubs, smaller at local edges—to cover diverse geographies.
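The origin figure in the Scalability point follows from a one-line relation: origin traffic = edge traffic × (1 − cache hit rate). The sketch below reproduces it with the numbers quoted above (73 Tbps, ~98%); the 95% and 99% rates in the loop are hypothetical what-ifs, included only to show how sensitive origin load is to the hit rate.

```python
def origin_load_tbps(edge_tbps: float, cache_hit_rate: float) -> float:
    """Traffic that misses the edge caches and must be fetched from origin."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return edge_tbps * (1.0 - cache_hit_rate)


# Figures from the text: 73 Tbps served at the edge with a ~98% hit rate.
origin = origin_load_tbps(73.0, 0.98)
print(f"{origin:.2f} Tbps")  # 1.46 Tbps

# Hypothetical what-if: each point of hit rate is worth ~0.73 Tbps of origin load here.
for rate in (0.95, 0.98, 0.99):
    print(f"hit rate {rate:.0%}: origin ~{origin_load_tbps(73.0, rate):.2f} Tbps")
# Prints 3.65, 1.46, and 0.73 Tbps respectively.
```

Going from 98% to 99% halves origin traffic, which is why pre-positioning content before demand arrives pays off.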

Open Connect appliances run FreeBSD with a tuned nginx web server for high-throughput delivery. Content updates propagate over a dedicated backbone network that connects to AWS regions.

Netflix aims for sub-second start times and zero buffering, even on variable networks. Latency is minimized through:

1. Proximity-Based Delivery

OCAs are embedded in ISPs, so content travels minimal hops (often staying within the same data center). This cuts round-trip times from hundreds of milliseconds (over transit) to tens of milliseconds (local).

Steering logic selects the nearest OCAs based on geolocation and network metrics, and the Netflix app streams from the best of the candidates it is given.
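Steering can be pictured as filtering out unhealthy appliances, ranking the rest, and handing the client a short ordered list. A minimal sketch, assuming a hypothetical ranking rule (prefer OCAs embedded in the subscriber's ISP, then lower measured RTT); the `OCA` fields, names, and ordering are illustrative and are not Netflix's steering algorithm.

```python
from dataclasses import dataclass


@dataclass
class OCA:
    name: str
    embedded_in_client_isp: bool  # inside the subscriber's ISP network (vs. at an IXP)
    rtt_ms: float                 # measured round-trip time to the client
    healthy: bool = True


def rank_ocas(candidates: list[OCA], max_results: int = 3) -> list[str]:
    """Return OCA names best-first: embedded before IXP, then lowest RTT."""
    usable = [oca for oca in candidates if oca.healthy]
    # False sorts before True, so embedded appliances come first.
    usable.sort(key=lambda oca: (not oca.embedded_in_client_isp, oca.rtt_ms))
    return [oca.name for oca in usable[:max_results]]


candidates = [
    OCA("ixp-fra-01", embedded_in_client_isp=False, rtt_ms=12.0),
    OCA("isp-berlin-07", embedded_in_client_isp=True, rtt_ms=4.5),
    OCA("isp-berlin-03", embedded_in_client_isp=True, rtt_ms=3.1, healthy=False),
    OCA("isp-hamburg-02", embedded_in_client_isp=True, rtt_ms=9.8),
]
print(rank_ocas(candidates))  # ['isp-berlin-07', 'isp-hamburg-02', 'ixp-fra-01']
```

Returning several candidates instead of one gives the client an immediate failover path if the first appliance turns out to be slow.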

2. Adaptive Bitrate Streaming (ABR)

Videos are segmented into 2-10 second chunks encoded at several bitrates. Clients switch bitrates dynamically based on measured bandwidth, using algorithms that predict throughput and prefetch upcoming chunks.

Encoding improvements (e.g., 200% more hours per GB over five years) reduce data needs, easing network strain.
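A simple throughput-based rate selector illustrates the switching idea. This is a generic ABR heuristic, not Netflix's production algorithm; the `LADDER_KBPS` values, the 0.8 safety factor, and the 10-second low-buffer threshold are assumptions chosen for illustration.

```python
# Hypothetical bitrate ladder in kbps, lowest to highest quality.
LADDER_KBPS = [235, 750, 1750, 3000, 5800, 15000]


def estimate_throughput(samples_kbps: list[float]) -> float:
    """Harmonic mean of recent chunk download rates; damps optimistic spikes."""
    return len(samples_kbps) / sum(1.0 / s for s in samples_kbps)


def choose_bitrate(samples_kbps: list[float], buffer_s: float,
                   safety: float = 0.8, low_buffer_s: float = 10.0) -> int:
    """Pick the highest rung that fits within a safety margin of estimated throughput."""
    if not samples_kbps:
        return LADDER_KBPS[0]  # no measurements yet: start conservatively
    budget = estimate_throughput(samples_kbps) * safety
    if buffer_s < low_buffer_s:
        budget *= 0.5  # buffer is draining: step down harder to avoid a stall
    fitting = [rate for rate in LADDER_KBPS if rate <= budget]
    return fitting[-1] if fitting else LADDER_KBPS[0]


recent = [6000.0, 5000.0, 7500.0]  # kbps measured on the last three chunks
print(choose_bitrate(recent, buffer_s=25.0))  # 3000
print(choose_bitrate(recent, buffer_s=4.0))   # 1750
```

The harmonic mean here is 6000 kbps, so the budget is 4800 kbps with a healthy buffer and 2400 kbps when the buffer is low. Real players typically blend throughput and buffer signals in more elaborate ways.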

3. Optimized Protocols and Caching

HTTP/2 or QUIC for faster connections.

Predictive pre-fetching: Based on viewing trends, content is cached proactively.

Edge Computing: OCAs handle manifests and encryption locally.
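Proactive caching amounts to deciding, before the nightly fill window, which files each appliance should hold. A minimal greedy sketch, assuming hypothetical per-title popularity forecasts and file sizes; the post does not describe Netflix's actual placement logic, and the `Title` fields and numbers below are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Title:
    title_id: str
    predicted_views: int  # forecast plays in this region (assumed input)
    size_gb: float        # storage needed for the encodes placed on this OCA


def plan_fill(titles: list[Title], capacity_gb: float) -> list[str]:
    """Greedy prefill: take titles with the most predicted views per GB until full."""
    ranked = sorted(titles, key=lambda t: t.predicted_views / t.size_gb, reverse=True)
    plan: list[str] = []
    used = 0.0
    for t in ranked:
        if used + t.size_gb <= capacity_gb:
            plan.append(t.title_id)
            used += t.size_gb
    return plan


catalog = [
    Title("new-series-ep1", predicted_views=90_000, size_gb=30.0),
    Title("hit-movie", predicted_views=60_000, size_gb=40.0),
    Title("classic-film", predicted_views=5_000, size_gb=25.0),
    Title("kids-show-ep3", predicted_views=20_000, size_gb=10.0),
]
print(plan_fill(catalog, capacity_gb=80.0))
# ['new-series-ep1', 'kids-show-ep3', 'hit-movie']
```

Ranking by views per GB is a standard greedy approximation to the knapsack problem; it fills the disk with the content most likely to turn future requests into cache hits.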

4. Live Streaming Enhancements

For live events (e.g., sports), Netflix uses a custom Live Origin server that feeds top-tier OCAs. Feeds are ingested through AWS Elemental MediaConnect and transcoded in real time by AWS Elemental MediaLive, and the resulting segments are published to distributed edges for synchronized, low-latency delivery (under 1 minute end-to-end).

5. Monitoring and Chaos Engineering

Tools like Chaos Monkey randomly terminate production instances to verify that services survive failures.

Real-time metrics adjust routing, avoiding congested paths.
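The core idea behind Chaos Monkey, randomly killing instances so that services must tolerate it, fits in a toy simulation. This is not Chaos Monkey's code or configuration: the in-memory `cluster` model, the rule that never empties a group, and the `still_serving` check are hypothetical simplifications.

```python
import random


def chaos_round(cluster: dict[str, list[str]], rng: random.Random) -> dict[str, str]:
    """Terminate one randomly chosen instance per service group (simulated)."""
    terminated = {}
    for group, instances in cluster.items():
        if len(instances) > 1:  # toy safety rule: never take a group to zero
            victim = rng.choice(instances)
            instances.remove(victim)
            terminated[group] = victim
    return terminated


def still_serving(cluster: dict[str, list[str]], min_healthy: int = 1) -> bool:
    """A service keeps working if every group retains enough instances."""
    return all(len(instances) >= min_healthy for instances in cluster.values())


cluster = {
    "playback-api": ["i-01", "i-02", "i-03"],
    "recommendations": ["i-11", "i-12"],
    "billing": ["i-21"],
}
rng = random.Random(7)  # seeded so the run is reproducible
killed = chaos_round(cluster, rng)
print(sorted(killed), still_serving(cluster))  # ['playback-api', 'recommendations'] True
```

Note that `billing` survives only because of the toy safety rule; a single-instance group is exactly the kind of weakness that this style of testing is meant to expose.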

In practice, this results in average latencies below 100ms for most users, with buffering incidents rare.

While Open Connect handles video, AWS powers the backend:

Microservices: Over 700 services, built with components such as Zuul (edge routing) and Hystrix (latency and fault tolerance), can be deployed independently.

Databases: Cassandra for distributed storage, handling petabytes of user data.

Big Data: Hadoop/Spark for analytics, Kafka for event streaming.

Auto-Scaling: EC2 instances scale with demand, using Titus for container orchestration.
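Hystrix's central pattern is the circuit breaker: after repeated failures, calls to a dependency are short-circuited to a fallback instead of piling up and exhausting resources. The sketch below shows that pattern in Python. It is not Hystrix's API (Hystrix is a Java library), and the threshold, cooldown, and `FlakyRecommendations` service are assumptions.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Closed -> open after N consecutive failures; half-open trial after a cooldown."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 5.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()  # open: fail fast without touching the dependency
            self.opened_at = None  # cooldown elapsed: half-open, allow a trial call
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            return fallback()
        self.failures = 0  # a success closes the circuit
        return result


class FlakyRecommendations:
    """Hypothetical dependency that is currently timing out."""

    def __init__(self) -> None:
        self.calls = 0

    def fetch(self) -> list[str]:
        self.calls += 1
        raise TimeoutError("recommendation service timed out")


def popular_titles() -> list[str]:
    return ["top-10-fallback"]  # degraded but usable response


now = [0.0]  # fake clock so the example is deterministic
service = FlakyRecommendations()
breaker = CircuitBreaker(failure_threshold=3, reset_after_s=5.0, clock=lambda: now[0])
for _ in range(5):
    rows = breaker.call(service.fetch, popular_titles)
print(rows, service.calls)  # ['top-10-fallback'] 3
```

After three failures the breaker opens, so the last two requests never reach the struggling service; users still get a generic row instead of an error.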

This hybrid model (AWS for compute, Open Connect for delivery) ensures flexibility.

Challenges and Innovations

Peak Traffic: Handled via over-provisioning and predictive scaling.

Global Regulations: Region-specific caching for content restrictions.

Sustainability: Efficient encoding reduces energy use.

Future: Expanding live capabilities and AI-driven optimizations.
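Predictive scaling boils down to provisioning for a forecast peak, plus headroom, before the traffic arrives rather than reacting after it. The arithmetic is shown below with entirely hypothetical numbers for the forecast, per-instance capacity, headroom, and minimum fleet size; none of them come from Netflix.

```python
import math


def instances_needed(forecast_peak_rps: float, rps_per_instance: float,
                     headroom: float = 0.25, min_instances: int = 2) -> int:
    """Instances to pre-provision for a forecast peak, with spare headroom."""
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    raw = forecast_peak_rps * (1.0 + headroom) / rps_per_instance
    return max(min_instances, math.ceil(raw))


# Hypothetical: evening peak forecast of 120,000 requests/s, 800 requests/s per instance.
print(instances_needed(120_000, 800))  # 188
```

Here 120,000 × 1.25 / 800 = 187.5, rounded up to 188; the headroom absorbs forecast error while reactive auto-scaling catches up.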

Netflix's architecture exemplifies distributed systems engineering: Open Connect brings content closer to users, cutting both latency and cost. This case study shows how proactive caching, ISP partnerships, and cloud integration combine into a resilient platform serving hundreds of millions of subscribers. As of 2026, Netflix continues to push the boundaries of streaming technology.